A new documentary just made me think harder about AI than anything I’ve read in months, and I didn’t love the experience. Ghost in the Machine, directed by Valerie Veatch, is coming to streaming this week, and it makes an argument worth engaging with even if you end up disagreeing with parts of it.

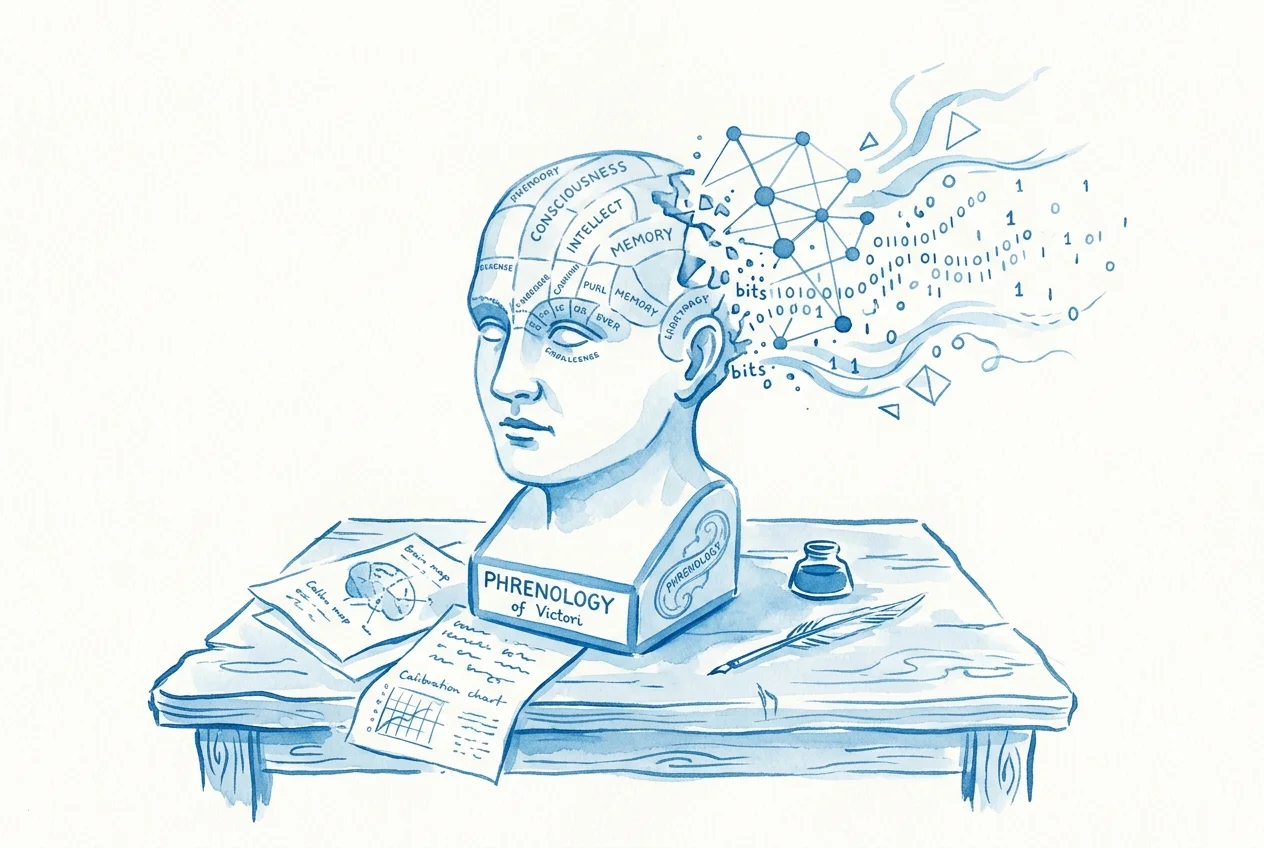

The film’s thesis is that generative AI’s statistical foundations trace back to Francis Galton, the father of eugenics, and that lineage isn’t just historical trivia. It’s part of why these models behave the way they do now.

Some of the argument is a stretch. Some of it is uncomfortably on point. Both of those things are true, and both are worth your time.

The Part That’s Hard to Argue With

The story that led Veatch to make this film is genuinely damning. She was part of an early Sora artists’ Slack group where one member, a woman of color, kept getting whitewashed by the model. She’d prompt images of herself in an art gallery, and the system would keep her braids, keep her fashion, but make her white, because it understood “art gallery” as a white space.

When Veatch raised the issue in the group, nobody engaged. When she brought it directly to OpenAI, the response was essentially: “This is cringe to bring up. There’s nothing we can do.”

That’s not ancient history. That’s a company choosing not to fix a known problem with its product. And if you’re an author using AI image generation for book covers or character visualization, this matters to you in a very direct way. The models carry biases. Sometimes those biases are subtle. Sometimes a woman starts “growing extra tits and twerking after two rounds of generating a scene,” which is a direct quote from the interview and not something I expected to type today.

The Part That’s Worth Thinking About

The documentary’s bigger claim is that the statistical tools underpinning machine learning (logistic regression, multidimensional modeling) were developed by people who were explicitly trying to quantify racial hierarchies. Galton measured the “attractiveness” of African and European women as part of his research. His protégé Karl Pearson built statistical tools that are still fundamental to how machine learning works, and he built them in service of proving that races were quantifiably different.

Does that mean logistic regression is inherently racist? No. Math doesn’t have politics. But the film’s point is more nuanced than that: the reason we think of human intelligence as something measurable and machine-replicable has roots in people who were trying to rank human beings. The concept of “artificial intelligence” requires you to first believe that intelligence is a discrete, quantifiable thing, and that belief has a specific origin story.

I find this genuinely interesting to sit with. Not as a reason to stop using AI tools, but as context for understanding why the people building them seem so allergic to discussing bias. If the foundational assumption is that intelligence is a clean, measurable quantity, then messiness, bias, and cultural context aren’t bugs to fix. They’re inconveniences that don’t fit the model.

Where I Think the Film Overplays Its Hand

Veatch says she had no interest in speaking with anyone from the companies she’s criticizing. “Am I going to hug Sam Altman on camera? Is that a truthful film about this technology? That’s propaganda.”

I get the impulse, but that’s also a choice that makes the film easier to dismiss. You can make a devastating critique and still let the other side speak. Refusing to engage with your subject isn’t the same thing as refusing to be co-opted by them.

That said, the AI companies have done themselves no favors here. When your response to documented racism in your product is “this is cringe to bring up,” you’ve forfeited some of your right to complain about not being included in the documentary.

Why This Matters to Indie Authors

You’re an indie author using AI tools. You didn’t build the models. You didn’t set the training data. You’re trying to write better books and run a business without a traditional publisher’s resources.

This history isn’t your fault. But it is your context.

When your AI image generator consistently defaults to white faces, that isn’t random. When your writing assistant struggles with dialects or cultural references outside a narrow Western default, that isn’t just a training data gap. There’s a reason these tools work the way they do, and understanding that reason makes you a better user of them.

Nobody’s saying you should feel guilty about using AI. Just pay attention to what it’s doing.

Ghost in the Machine streams on Kinema from March 26th through the 28th, and will air on PBS this fall. Whether you agree with every thread it pulls or not, it’s asking questions that the people selling you these tools would prefer you never thought about.

Sources

- “The gen AI Kool-Aid tastes like eugenics,” The Verge: interview with Ghost in the Machine director Valerie Veatch

- Ghost in the Machine acquisition and trailer, Deadline: coverage of the documentary’s distribution deal