The federal government is trying to crush Anthropic, the company that makes Claude, because Anthropic had the audacity to say “maybe don’t use our AI for everything military.” A federal judge looked at what was happening and said, on the record, “It looks like an attempt to cripple Anthropic.”

If you use Claude to brainstorm, outline, draft, or generally make the business of writing books less lonely… this one’s worth your attention.

What actually happened

Anthropic tried to put restrictions on how the military could use its AI tools. The government didn’t like that. So the Department of Defense (which now calls itself the Department of War, if you’re keeping track) slapped Anthropic with a “supply-chain risk” designation.

That’s a big deal. It’s the kind of label normally reserved for foreign adversaries and hostile actors. It tells every government contractor not to do business with this company. Defense Secretary Pete Hegseth went further, posting on X that no military contractor could conduct “any commercial activity” with Anthropic. The government’s own attorney later admitted in court that Hegseth has no legal authority to enforce that. (Whoops.)

When the judge asked why Hegseth posted it anyway, the government’s lawyer said, “I don’t know.”

Cool cool cool. Very reassuring.

Anthropic filed two federal lawsuits calling the designation illegal retaliation. During the hearing, Judge Rita Lin said the designation “doesn’t seem to be tailored to stated national security concerns” and noted it looked like punishment for Anthropic bringing public scrutiny to the dispute. That, she pointed out, would be a First Amendment violation.

The Pentagon’s stated worry? That Anthropic might sabotage its own software so it doesn’t work the way the military wants. Anthropic says it can’t even update its models without Pentagon permission. The government’s lawyer said he didn’t know if that was true. A lot of “I don’t know” coming from the side with all the tanks.

Why this matters if you’ve never held a government contract

You use Claude to help you write books. You use it to summarize research, punch up dialogue, brainstorm series arcs, generate marketing copy. Maybe you’ve built it into your whole workflow. Some of you have told me it’s the most useful tool you’ve added to your process in years.

Now imagine the company behind that tool gets financially kneecapped by the federal government. Not because of anything wrong with the product. Not because of a security flaw. Because they told the military “we’d like some limits on how you use our AI” and the military decided to make an example of them.

Anthropic’s customers are already getting skittish. The company is seeking an emergency court order partly because it needs to stop the bleeding while the case plays out. If Anthropic takes a serious financial hit, that affects product development, model quality, pricing, long-term viability. It affects you.

The bigger picture is uncomfortable

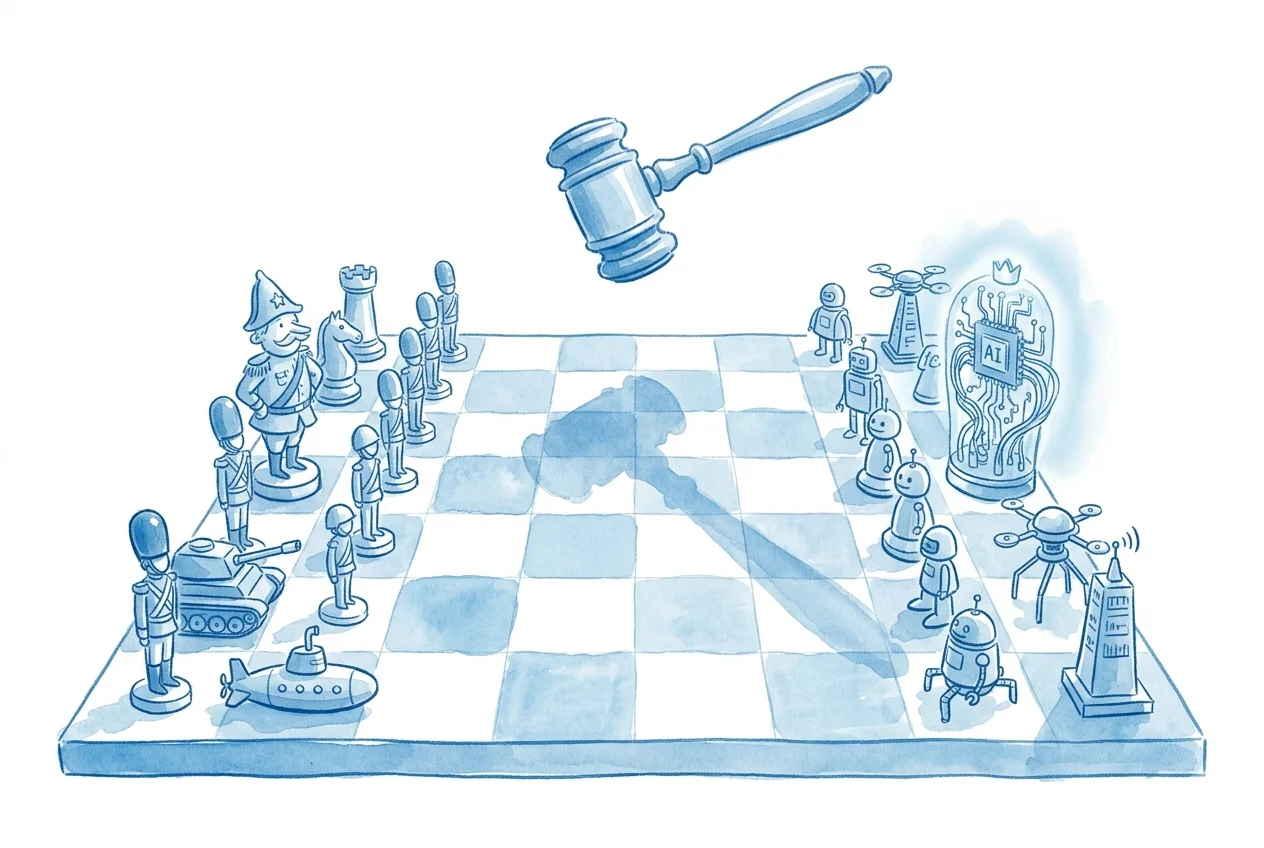

This case surfaces a question that’s going to keep coming back. Who decides how AI tools get used?

The companies building them? The government? The market? Some combination that nobody’s worked out yet?

Anthropic has positioned itself as the “responsible AI” company. You can argue about whether that’s genuine conviction or smart branding (I think it’s both, which is fine). But whatever their motivation, they tried to set boundaries on military use of their technology, and the government’s response was to threaten their entire business.

That sends a message to every other AI company. Fall in line, or we have tools to hurt you that were designed for foreign adversaries, and we’ll use them on you instead.

Whether you think Anthropic was right to restrict military use or not, the mechanism of retaliation here should bother you. If the government can designate any domestic AI company a “supply-chain risk” as punishment for disagreeing with how its tools are deployed, that reshapes the entire industry. It pushes AI development toward companies willing to build whatever the government wants, however the government wants it. Not exactly the kind of market that produces great creative writing tools for indie authors.

What to actually do with this

Nothing dramatic. Keep using the tools that work for you. But maybe don’t sleepwalk through it.

Don’t build your entire workflow around a single provider. This is good advice regardless of the Anthropic situation. If Claude disappeared tomorrow, could you keep working? You should be able to answer yes. Know what your backup tools are. Test them occasionally.

Pay attention to this case. The ruling on the injunction will signal how much legal protection AI companies have when they disagree with the government. That precedent affects every tool you use.

Being “pro-AI” doesn’t mean being uncritical of everyone in the AI space. You can believe these tools are transformative for indie authors and also believe that governments bulldozing AI companies into compliance is worth watching carefully. Both of those things can be true at the same time.

The Pentagon wants to replace Anthropic with Google, OpenAI, xAI, and whoever else will play ball. Those are fine companies with useful products. But the reason for the switch isn’t technical superiority. It’s compliance. And a market where the most compliant company wins the government’s blessing isn’t necessarily the market that produces the best tools for you.

Meanwhile, in a quieter corner

Speaking of AI tools doing useful things (because I refuse to end on government drama), a barrister in the UK named Anthony Searle has been using ChatGPT to prepare sharper questions in coroner’s inquests. These are cases where families are trying to understand how their loved ones died. The courts are underfunded, independent expert reports get denied, and Searle found that AI research tools helped him ask better technical questions about surgical procedures.

He’s careful about it. No client data goes into the tools. He verifies everything. He’s also building custom apps for calculating damages in negligence claims. Small, practical stuff. Someone using AI to do his job better in a system that doesn’t give him enough resources to do it the traditional way.

Sound familiar? You don’t use AI because you’re lazy. You use it because you’re one person trying to do the work of a small team, and these tools make that possible. Legal briefs, romantasy series, it doesn’t matter. The dynamic is the same.

So yeah. Pay attention to who’s trying to control those tools.

Sources

- Pentagon’s ‘Attempt to Cripple’ Anthropic Is Troubling, Judge Says — Wired’s coverage of the March 24 court hearing in Anthropic v. Department of Defense

- AI is beginning to change the business of law — Ars Technica on barristers using AI tools in legal practice